The RAG API

Upload, Search, Reason

Production-ready multimodal RAG. One API call. No GPUs to manage, no parsers to babysit, no vector DB to scale.

REST API

OpenAPI V3

LLM Agnostic

SOC 2 Type 1

HOW IT WORKS

Raw Document → Grounded Answer

LightOnOCR-2 • 1B

Ingest & Parse

Bulk-ingest your document base through a single API call. Sync from SharePoint, Teams, or ServiceNow. The parsing engine scores 83.2 on OlmOCR-Bench — beating every system evaluated, including models 9× its size.

NextPlaid • Rust • CPU-optimized

Chunk & Index

Intelligent splitting preserves context. The multi-vector database indexes each chunk with token-level precision, finding the exact paragraph in a 200-page manual, not just "a related document."
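To make "token-level precision" concrete, here is a minimal sketch of late-interaction (MaxSim) scoring, the technique commonly used by ColBERT-style multi-vector retrievers. It is an illustration under stated assumptions, not the NextPlaid implementation: the function names and the toy 2-d embeddings are hypothetical, and a real index would use learned token embeddings with approximate search.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def maxsim_score(query_tokens, chunk_tokens):
    """Late-interaction score: each query token keeps only its best-matching
    chunk token, and the per-token maxima are summed."""
    return sum(max(cosine(q, d) for d in chunk_tokens) for q in query_tokens)

# Toy token embeddings, made up for illustration only.
query = [[1.0, 0.0], [0.0, 1.0]]
chunks = {
    "paragraph_a": [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],  # matches both query tokens exactly
    "paragraph_b": [[1.0, 1.0], [1.0, 1.0]],              # only a blended, partial match
}

# Rank chunks from best to worst MaxSim score.
ranked = sorted(chunks, key=lambda name: maxsim_score(query, chunks[name]), reverse=True)
print(ranked)
```

A single-vector index would have to compress each paragraph into one embedding. Keeping one vector per token lets an exact match on individual terms outrank a paragraph that is merely related on average.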

LateOn • Multi-vector Retrieval

Retrieve & Rerank

Candidate pages are retrieved, then individually evaluated. Out of Pages 3, 4, and 6: "Page 3 relevant. Page 4 no. Page 6 yes." Only pertinent content reaches the LLM. Noise eliminated.
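The per-page evaluation described above can be sketched as a simple filter. This is a hedged illustration only: `filter_relevant` and `keyword_judge` are hypothetical names, the page texts are invented, and the keyword check stands in for whatever reranking model actually judges each page.

```python
from typing import Callable, Dict, List

def filter_relevant(
    question: str,
    candidates: Dict[int, str],
    is_relevant: Callable[[str, str], bool],
) -> List[int]:
    """Judge each candidate page on its own and keep only the relevant ones."""
    return [page for page, text in sorted(candidates.items()) if is_relevant(question, text)]

def keyword_judge(question: str, page_text: str) -> bool:
    """Stand-in judge (assumption): a page is relevant if it contains any
    word of the question that is longer than four characters."""
    words = {w.strip("?.,").lower() for w in question.split() if len(w) > 4}
    return any(w in page_text.lower() for w in words)

# Invented candidate pages keyed by page number.
candidates = {
    3: "Torque settings for the main rotor bolts.",
    4: "Warranty terms and contact details.",
    6: "Rotor inspection schedule and tolerances.",
}

kept = filter_relevant("What torque applies to the rotor?", candidates, keyword_judge)
print(kept)  # → [3, 6]
```

Because every page gets its own yes/no decision, one weak candidate cannot dilute the context passed on to the LLM.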

Any LLM • Grounded Output

Reason & Respond

Filtered context is sent to the LLM of your choice. Pick any model based on your privacy, cost, or performance requirements: open-source, commercial, or private.
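One way to picture an LLM-agnostic final step is a small interface that any backend can satisfy. The sketch below is an assumption for illustration, not the LightOn API: `ChatModel`, `build_grounded_prompt`, `answer`, and `StubModel` are hypothetical names, and the stub simply stands in for an open-source, commercial, or private model.

```python
from typing import List, Protocol

class ChatModel(Protocol):
    """Anything with a complete(prompt) -> str method can serve as the backend."""
    def complete(self, prompt: str) -> str: ...

def build_grounded_prompt(question: str, passages: List[str]) -> str:
    """Number the filtered passages and instruct the model to stay within them."""
    numbered = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the numbered context. "
        "Say so if the context is insufficient.\n\n"
        f"Context:\n{numbered}\n\nQuestion: {question}"
    )

def answer(question: str, passages: List[str], model: ChatModel) -> str:
    """Send the same grounded prompt to whichever model was chosen."""
    return model.complete(build_grounded_prompt(question, passages))

class StubModel:
    """Placeholder backend; swap in a real client that exposes complete()."""
    def complete(self, prompt: str) -> str:
        return f"(stub) received {len(prompt)} characters"

print(answer("What torque applies?", ["Torque: 45 Nm.", "Inspect monthly."], StubModel()))
```

Since the prompt is built independently of the backend, switching models for privacy, cost, or performance reasons only changes which object is passed as `model`.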

The hardest part isn't building a RAG pipeline. It's keeping it current. We handle every upgrade, every migration, every month. Your integration stays stable. Your product stays ahead.

Buy vs. Build

You've Built Internal RAG

Now You Need It to Scale

Parser maintenance. Model updates. Chunking edge cases. Vector DB scaling. That's infrastructure work, not product work.

Offload the plumbing. Keep the control.

Without LightOn API

Your current reality

Ongoing Maintenance

Parsers, OCR, chunking logic...

Custom ACL Implementation

Synced with your IAM

GPU Scaling

And inference optimization

Model Upgrades

Regression testing

With LightOn API

Your potential reality

Universal Ingestion Endpoint

PDF, Office, scans, HTML. We handle extraction and semantic chunking.

Native Workspace & Collection Isolation

SSO/SAML integration, audit logs out of the box.

Managed Pipeline

Optimized for low-footprint deployment (on-prem or private cloud).

Versioned API V3

Backward compatibility, controlled rollout.

Why LightOn API

What You Get That

You Can't Build or Buy Elsewhere

| Features | DIY RAG | Legacy Search | Cloud RAG | LightOn API |
| --- | --- | --- | --- | --- |
| Complex tables & diagrams | Breaks | Text only | Basic | OCR-2 native |
| Search precision | Single vector | Keywords | Single vector | Multi-vector |
| On-premise / air-gapped | Complex DIY | Possible | Cloud only | 3 GPUs |
| ACL enforcement | DIY | Partial | Varies | Doc-level |
| LLM flexibility | Locked | N/A | Vendor | Agnostic |
| Time to production | 6-12 months | 2-3 months | 1-2 months | Now |

Hardware Efficiency

Maximum Intelligence

Minimum Hardware Footprint

Enterprise AI shouldn't require a nuclear power plant.

Our Search is engineered to run on constrained infrastructure (on-premise or private cloud), maximizing the "Performance per Token" ratio.

Optimized

We build and fine-tune models specifically for RAG tasks.

Quantization Mastery

High-speed inference with low VRAM usage.

Cost-Efficient Scaling

Scale your API usage without linearly exploding your GPU budget.
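As a rough worked example of why quantization lowers VRAM usage, the arithmetic below estimates the memory needed for model weights alone at different precisions. It assumes the "1B" label above means about one billion parameters, and it ignores activations, caches, and runtime overhead. The bit widths are generic illustrations, not a statement of what LightOn deploys.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

# Assumed parameter count: one billion (from the "1B" label).
for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_memory_gb(1e9, bits):.2f} GB")
# 16-bit: 2.00 GB
# 8-bit: 1.00 GB
# 4-bit: 0.50 GB
```

Halving the bits per weight halves the weight footprint, which is one reason a quantized model can fit on smaller or fewer GPUs.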

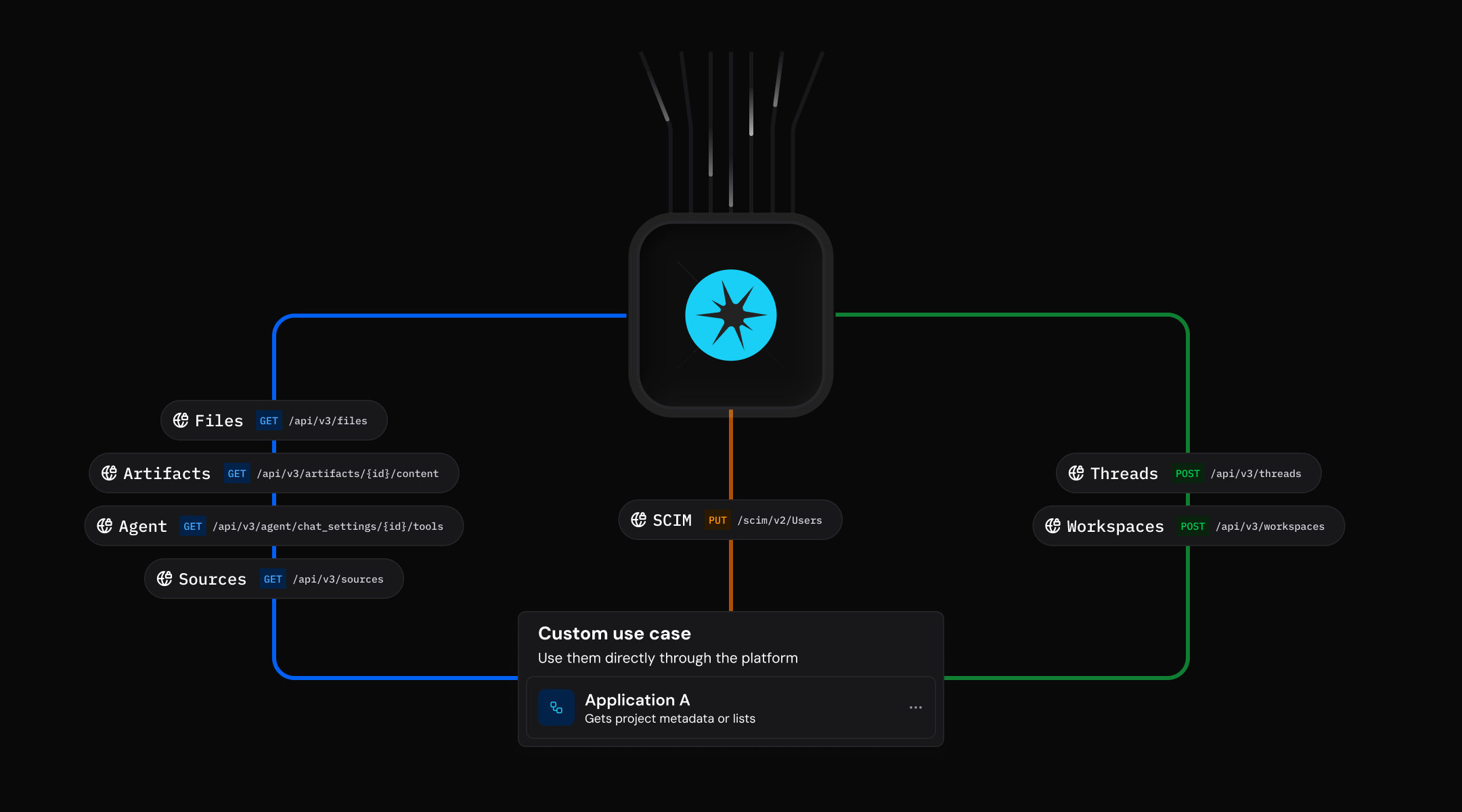

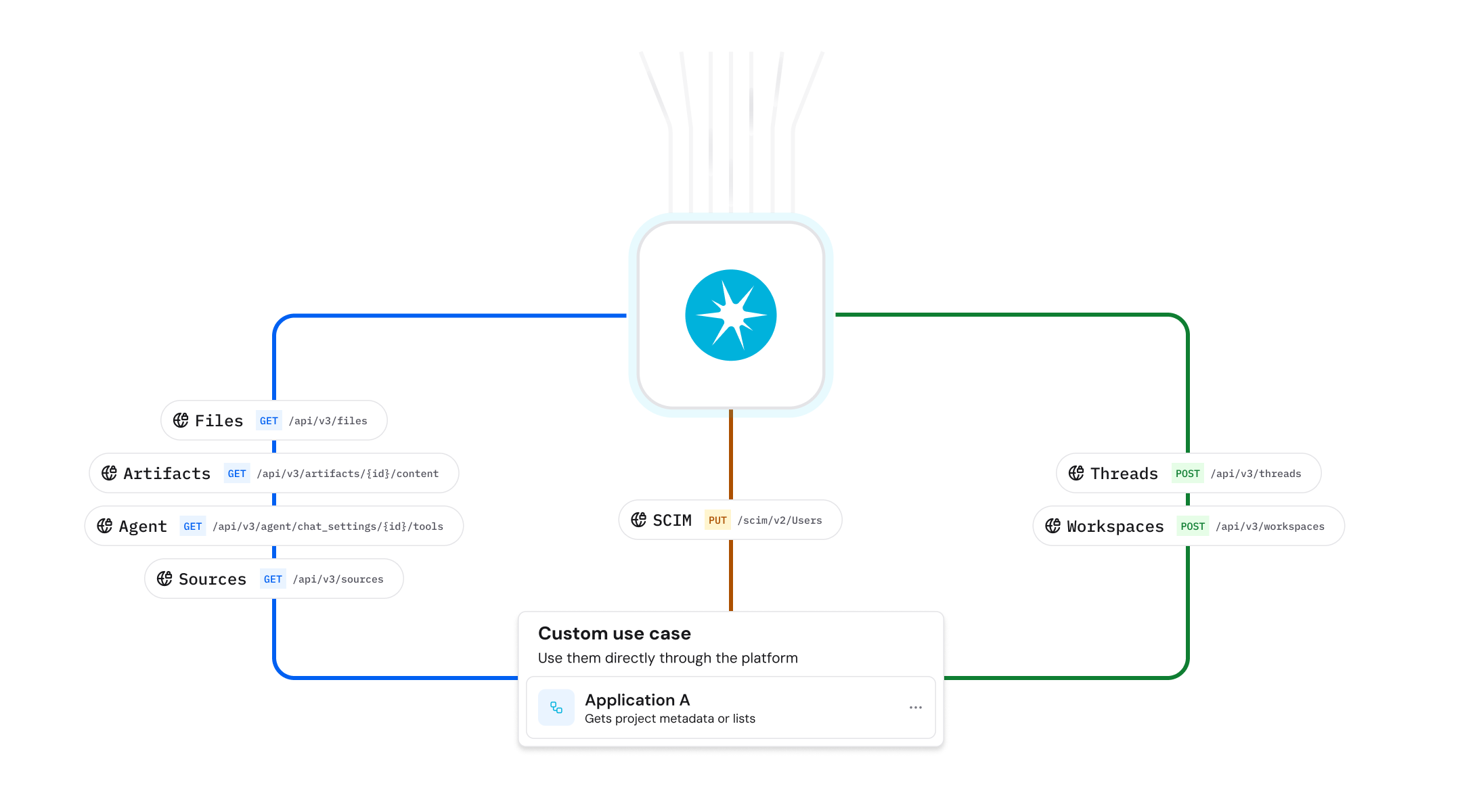

Developer use cases

What Will You Build?

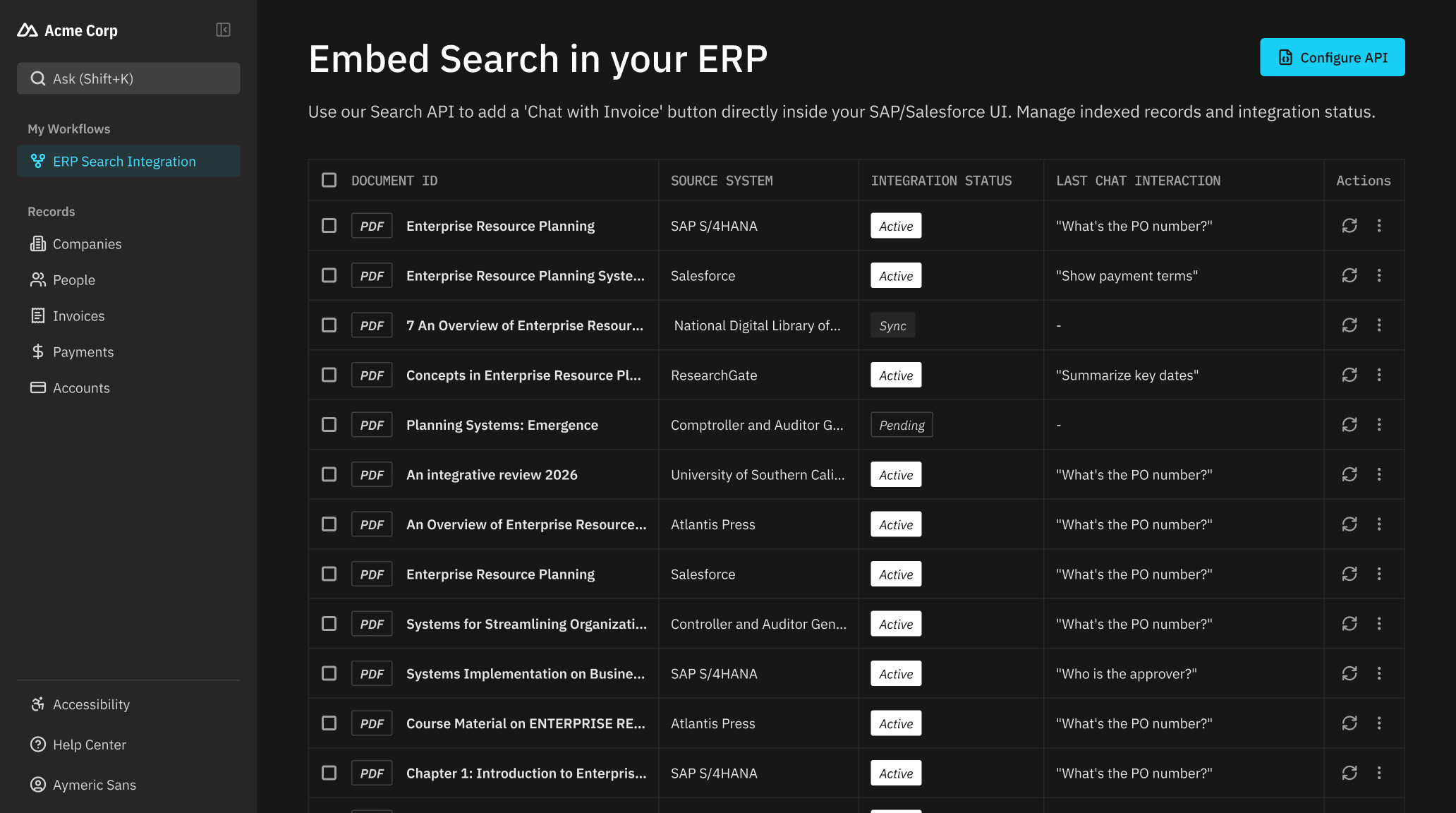

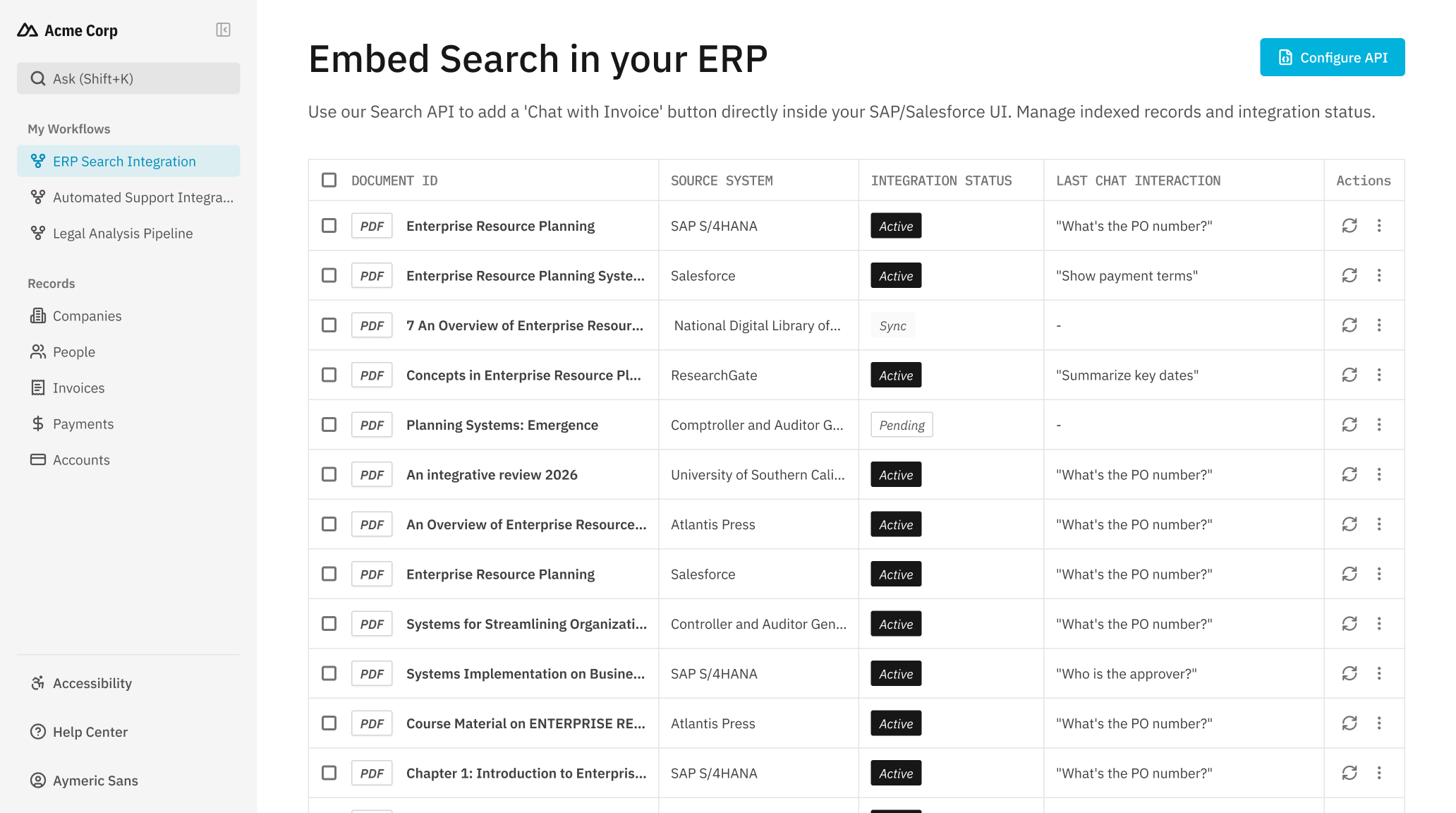

Embed Search in your ERP

Functionality. Use our Search API to add a "Chat with Invoice" button directly inside your SAP/Salesforce UI.

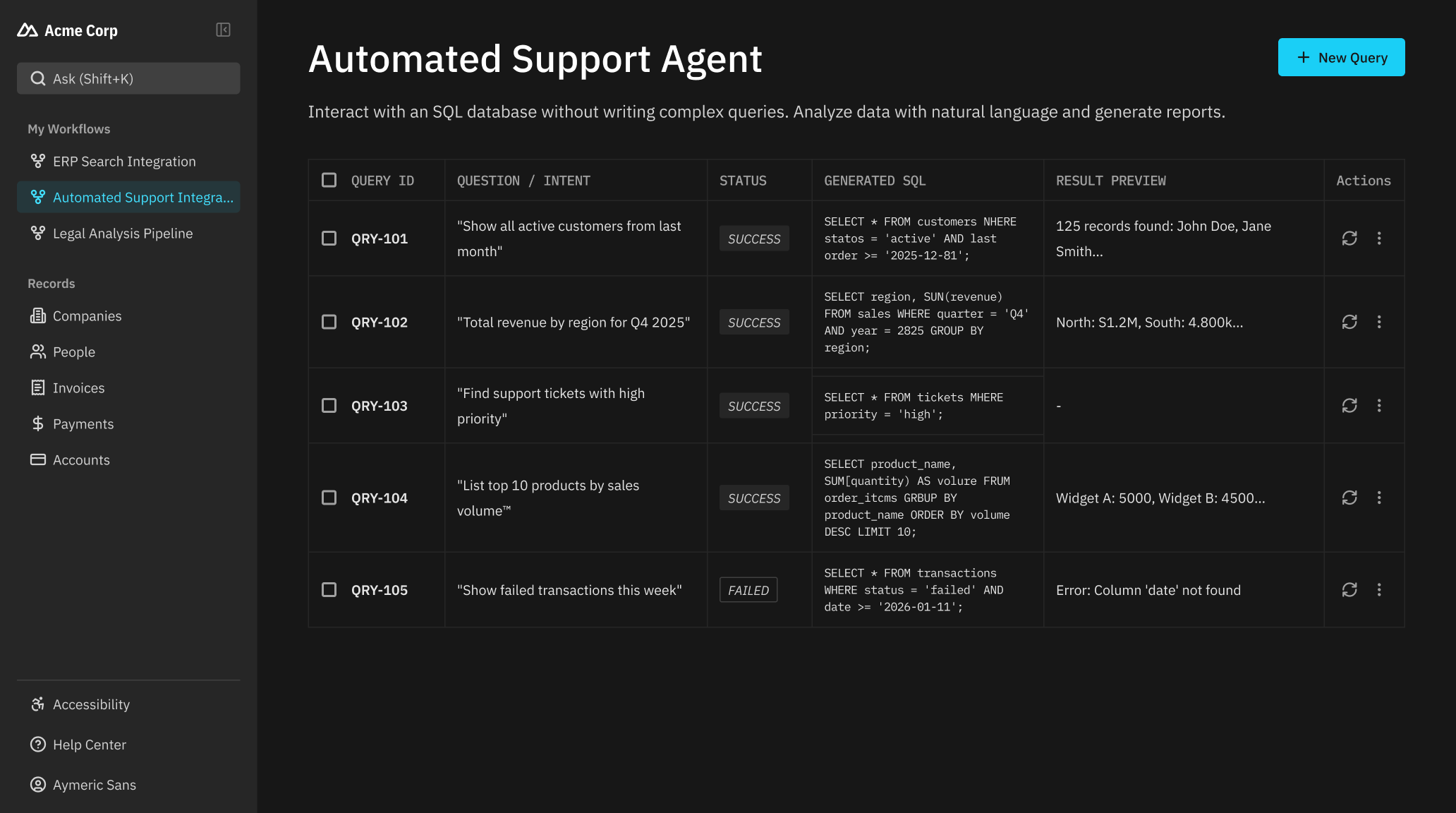

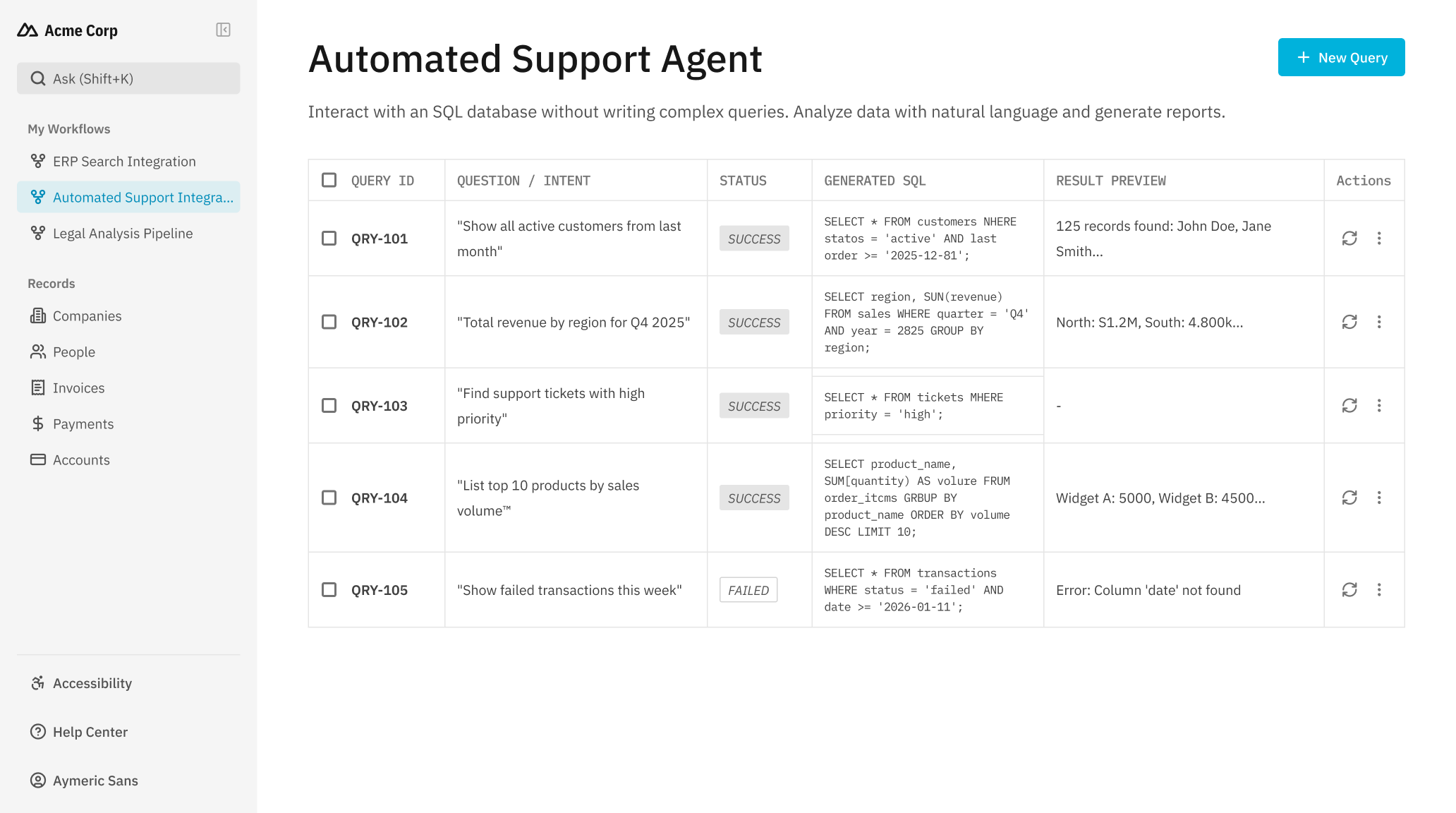

Automated Support Agent

Functionality. Interact with an SQL database without writing complex queries.

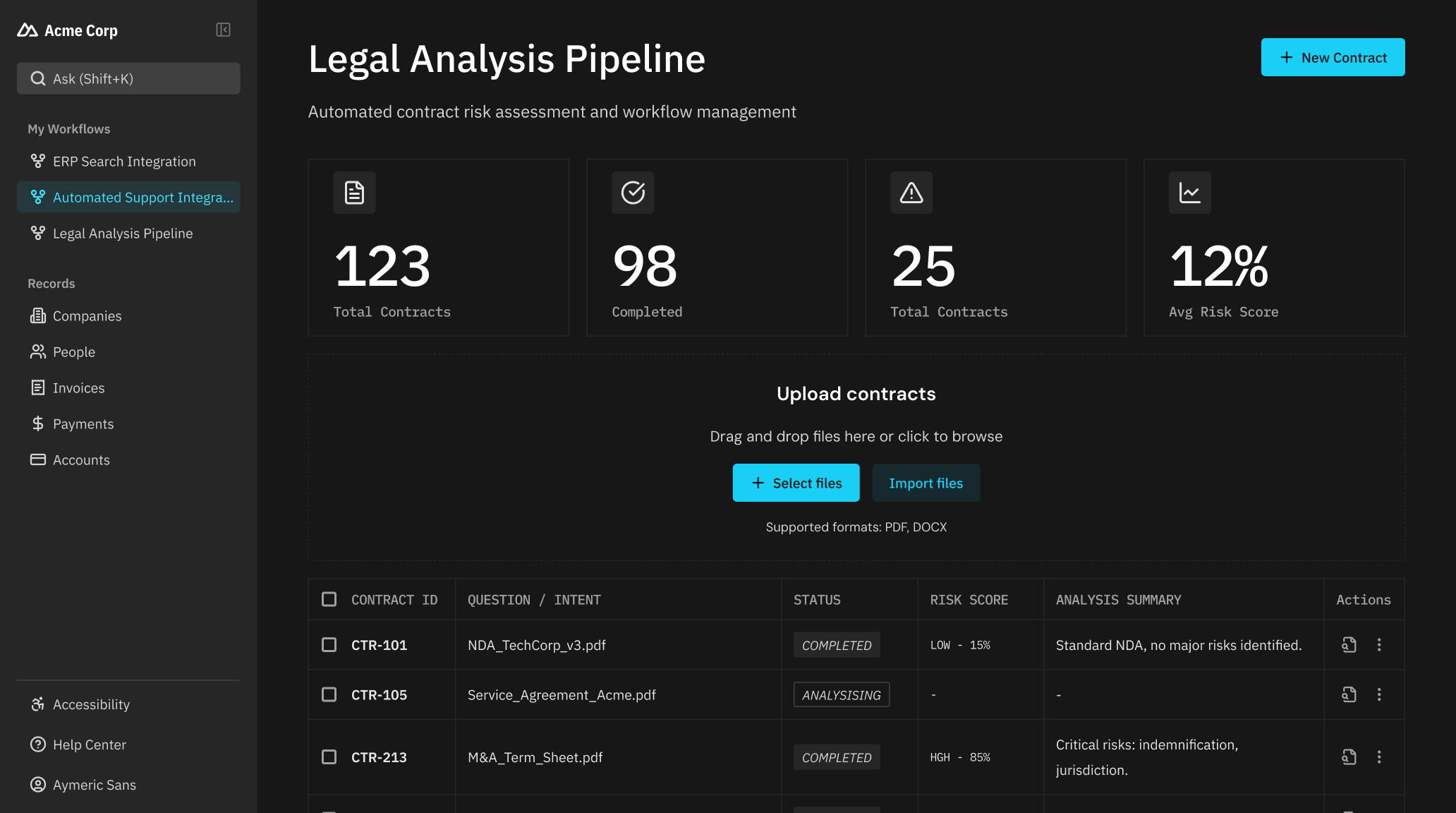

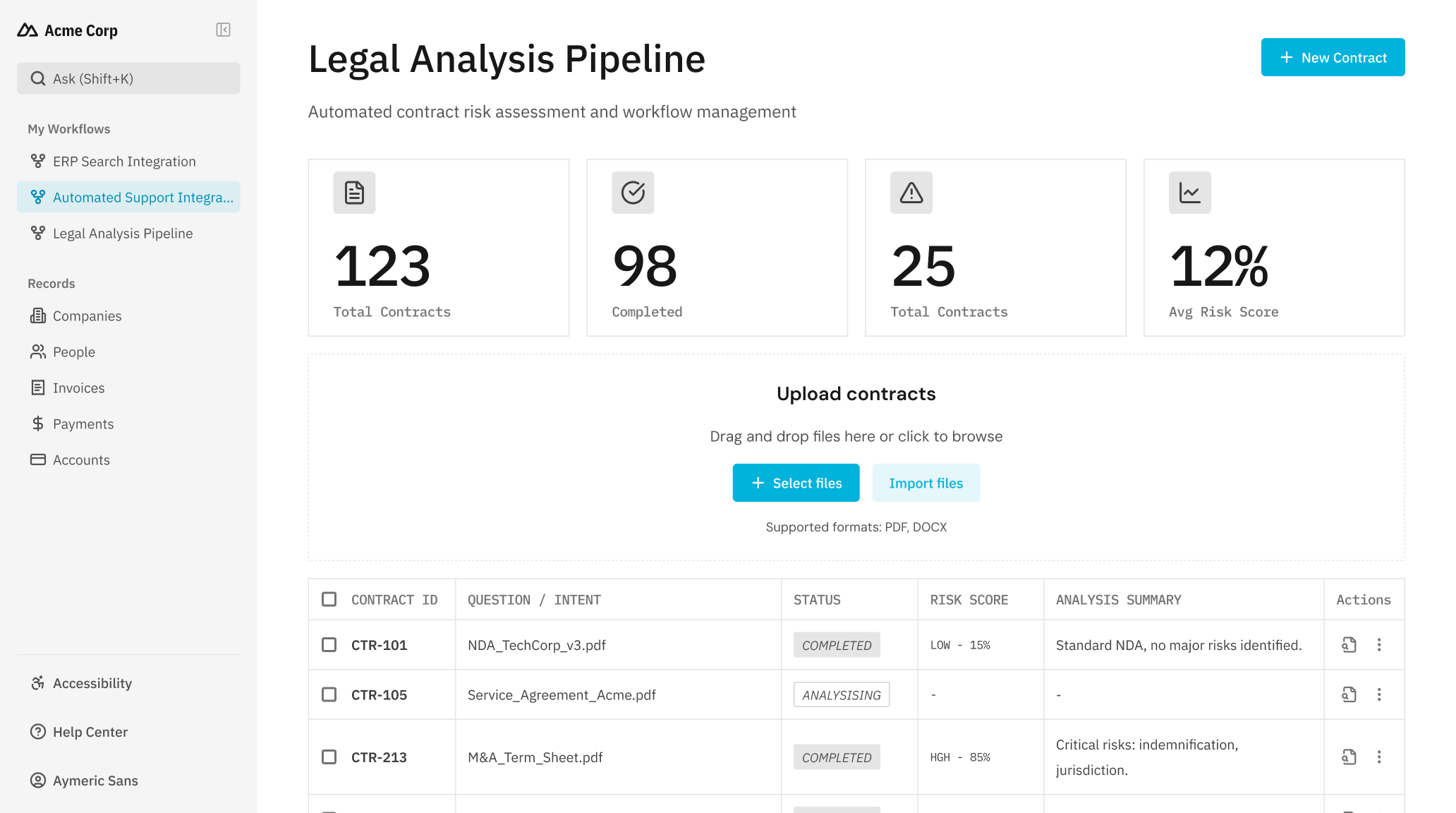

Legal Analysis Pipeline

Functionality. Create a workflow that uploads contracts to a secure Workspace and triggers a risk analysis prompt automatically.

Developer Experience

Built by Developers,

for Developers

Comprehensive Documentation

Full Swagger/OpenAPI V3 specs, "Quick Start" guides, and detailed recipes in the Paradigm Academy.

Standard Protocols

RESTful architecture that is easy to consume from Python, Node.js, Java, or Go.

Native Extensibility

Need to connect to live tools? The API supports the Model Context Protocol (MCP) to give the AI access to your internal APIs.

Deployment

Deploy with Confidence

Security & Compliance

Key Certification (SOC 2 Type 1)

Flexible Hosting (Private Cloud, On-Premise, Air-Gapped)

Audit & Traceability (Complete activity tracking)

Access Management & Integration

Single Sign-On (SSO) & SCIM

Fine-Grained Permissions (ACL) (Per user and group)

Group Synchronization

Control & Advisory

Budget Control (Flat and predictable pricing)

Advanced Customization (Adapted to your specific needs)

Dedicated Expert Support (Implementation assistance)

Don’t just take our word for it

Hear from some of our amazing customers who are building faster

The expertise of their tech team and the rapid evolution of the product, such as the hybrid search feature, put them at the forefront of innovation.

Jérôme Lacaille

Emeritus Expert in Algorithms

Babbar needed an efficient SEO strategy enhancement through LLM technology to stay competitive in the dynamic SEO industry.

Sylvain Peyronnet

Co-founder & search engine specialist

LightOn responded very quickly with tools that perfectly matched our needs, enhancing our document base and onboarding users without experience.

Achille Lerpinière

Chief Information & Technology Officer

Built on Open Research.

Tested and trusted by the community.

42,060,936

Model downloads on Hugging Face

ModernBERT

LightOnOCR-2

GTE-ModernColBERT

LateOn-code

ColBERT-Zero

OriOn

...

3,239

Hugging Face likes

ModernBERT

LightOnOCR-2

GTE-ModernColBERT

LateOn-code

ColBERT-Zero

OriOn

...

2,035

GitHub stars

PyLate

NextPlaid

FastPlaid

ColGrep

PyLate-rs

...

534,000

PyPI downloads

PyLate

NextPlaid

FastPlaid

ColGrep

PyLate-rs

...
